You are the CEO of a Fortune 500 company and you have been reading about the Sony Pictures hack in the news lately. You are worried that your company’s systems may also be vulnerable to hack attacks, so you hire IT consultants to make recommendations. They tell you:

- Only 2% of the Fortune 500 companies will experience serious hack attacks in any given year

- Testing for system vulnerabilities is very expensive: it will cost the company’s full-year profit. If you are able to plug the weaknesses successfully, it is definitely worth the cost. If not, you are in serious trouble for wasting shareholders’ money.

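- The confidence level of the tests is very high at 90%, i.e. if there are system weaknesses that make hacking possible, the tests will positively identify them 90% of the time. (Strictly speaking, this is the test’s true positive rate, or sensitivity. The tests also raise false alarms: they flag a weakness that does not exist 10% of the time.)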

Given the high confidence level, the consultants recommend getting systems test done.

As CEO, what is your decision? How should you approach such a situation, and are there models available to help you decide?

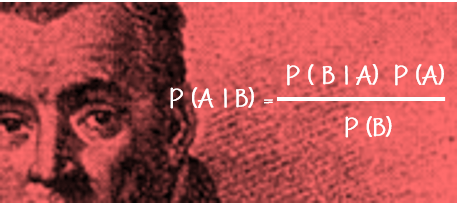

Bayes’ theorem can be a good model for thinking through such situations. It was conceived in the 18th century by Thomas Bayes. Bayes’ theorem applies the principles of probability to an essential question: “How should we modify our beliefs in the light of additional information?” Essentially, it tells you the probabilities of possibilities.

Nate Silver, a statistician, correctly predicted the outcome in all 50 states in the 2012 US presidential election, and he is said to have used Bayes’ theorem to make his predictions.

Let us first solve the systems test problem above with a common-sense approach. The question for the CEO is: is it worth spending shareholders’ money to get the systems test done?

Capturing the information in a table:

|  | Hack Attack (2%) | No Hack Attack (98%) |
|---|---|---|
| Test detects system weakness | 90%: True Positive, i.e. the test correctly identifies a system weakness when a weakness that makes hacking possible exists | 10%: False Positive, i.e. the test incorrectly identifies a system weakness when no weakness exists |

True positive identification of system weakness:

Number of Fortune 500 companies that will have a hack attack: 2% of 500 companies = 10 companies

True positive detection of system weakness by the test: 90% of 10 companies = 9 companies (X)

False positive identification of system weakness:

Number of Fortune 500 companies that will not have a hack attack: 98% of 500 companies = 490 companies

False positive detection of system weakness by the test: 10% of 490 companies = 49 companies (Y)

Total number of companies identified by the test as having a system weakness:

(X) + (Y) = 9 + 49 = 58 (Z)

Probability of a hack attack among the companies the test identified as having a system weakness:

The number of companies that are actually vulnerable to a hack attack (X) divided by the total number of companies the test flagged (Z):

(X)/(Z) = 9/58 = 0.1552 (approximately 15%)
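For readers who like to check the arithmetic, here is a minimal Python sketch of the same common-sense counting. It simply reuses the rates from the table (2% attacked, 90% true positive, 10% false positive) on a population of 500 companies; the function and constant names are made up for illustration.

```python
# Natural-frequency version of the common-sense calculation.
# Rates come from the table above; names are illustrative only.

TOTAL_COMPANIES = 500
ATTACK_RATE = 0.02          # share of companies that will be hacked
TRUE_POSITIVE_RATE = 0.90   # test flags a weakness that really exists
FALSE_POSITIVE_RATE = 0.10  # test flags a weakness that does not exist


def share_of_flagged_that_are_real(total, attack_rate, tpr, fpr):
    """Return (X, Y, Z, X/Z) exactly as in the worked example."""
    attacked = total * attack_rate      # 10 companies
    not_attacked = total - attacked     # 490 companies
    true_pos = tpr * attacked           # X
    false_pos = fpr * not_attacked      # Y
    flagged = true_pos + false_pos      # Z
    return true_pos, false_pos, flagged, true_pos / flagged


x, y, z, p = share_of_flagged_that_are_real(
    TOTAL_COMPANIES, ATTACK_RATE, TRUE_POSITIVE_RATE, FALSE_POSITIVE_RATE
)
print(f"X = {x:.0f}, Y = {y:.0f}, Z = {z:.0f}, X/Z = {p:.4f}")
```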

What does the above percentage mean?

It means that even if your company tests positive, there is only about a 15% chance that it will actually suffer a hack attack. As CEO, I would need more evidence before acting on the recommendation.
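One hypothetical way to get that extra evidence is to re-test the companies that come back positive. The Python sketch below repeats the common-sense counting for a second and third round. It rests on assumptions that are not in the consultants’ figures, so treat it as illustrative only: every round is assumed to have the same 90% true positive and 10% false positive rates, and the rounds are assumed to make independent errors.

```python
# Hypothetical follow-up: re-test only the companies flagged in the previous round.
# ASSUMPTION: each round has the same 90% true positive and 10% false positive
# rates, and the rounds make independent errors.

TRUE_POSITIVE_RATE = 0.90
FALSE_POSITIVE_RATE = 0.10


def retest(truly_weak, not_weak, rounds):
    """Apply `rounds` of testing, keeping only companies that test positive.

    Returns the share of still-flagged companies that are truly weak
    after each round.
    """
    shares = []
    for _ in range(rounds):
        truly_weak *= TRUE_POSITIVE_RATE  # real weaknesses flagged again
        not_weak *= FALSE_POSITIVE_RATE   # false alarms that repeat
        shares.append(truly_weak / (truly_weak + not_weak))
    return shares


# Start from the 10 attacked and 490 non-attacked companies.
for n, share in enumerate(retest(10, 490, rounds=3), start=1):
    print(f"after {n} positive test(s): {share:.1%}")
```

Under those assumptions, a second positive result would raise the chance of a real weakness from about 15% to about 62%, and a third to about 94%. Repeated tests on the same systems are unlikely to be fully independent in practice, so these figures are probably optimistic.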

Bayes’ Theorem

Let’s see what Bayes’ theorem is and how we can put it to daily use. Using the example above, in simple English, Bayes’ theorem gives us P(A|B): the probability of having a hack attack (A) given a positive test (B). The equation is:

P(A|B) = P(B|A) P(A) / P(B)

The other parts of the equation, in simple English:

- P(B|A) = chance of testing positive given that the company is one of the 2% hack attack targets, i.e. the true positive rate: 90% in our example above

- P(A) = probability of having a hack attack: 2% in our example above, which corresponds to 2% of 500 companies = 10 companies

- P(B) = probability of a positive test: the share of all companies the test will show as having a weakness (true positives + false positives). In our example above that is 58 of the 500 companies, i.e. 58/500 = 0.116

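Putting it together (the common factor of 500 cancels out, so we can work with either probabilities or company counts):

P(A|B) = (0.9 * 0.02) / 0.116 = (0.9 * 10) / 58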

P(A|B) = 0.1552 (approximately 15%), which is the same result we reached with the common-sense approach. The formula simply makes it much quicker to get there.
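The same calculation can be written as a small, general-purpose Python helper. This is a sketch rather than a library routine (the name `bayes_posterior` and its parameters are hypothetical). Instead of being handed P(B) directly, it builds P(B) from the two ways a positive result can happen, true positives plus false positives, which is exactly how the 58 companies were counted above.

```python
def bayes_posterior(prior, p_evidence_given_h, p_evidence_given_not_h):
    """Return P(H | E) using Bayes' theorem.

    prior                  -- P(H), e.g. P(A) = 0.02
    p_evidence_given_h     -- P(E | H), e.g. P(B|A) = 0.90
    p_evidence_given_not_h -- P(E | not H), the false positive rate, e.g. 0.10
    """
    # P(E) by the law of total probability: true positives + false positives
    p_evidence = (p_evidence_given_h * prior
                  + p_evidence_given_not_h * (1 - prior))
    return p_evidence_given_h * prior / p_evidence


p_hack_given_positive = bayes_posterior(prior=0.02,
                                        p_evidence_given_h=0.90,
                                        p_evidence_given_not_h=0.10)
print(f"P(A|B) = {p_hack_given_positive:.4f}")
```

Because the helper only needs a prior and the two error rates, the same call with the cab numbers below, `bayes_posterior(0.15, 0.80, 0.20)`, reproduces the 0.4138 worked out there.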

Let us apply this to another simple problem, one I read about in Daniel Kahneman’s book Thinking, Fast and Slow.

A cab was involved in a hit-and-run accident at night. Two cab companies, the Green and the Blue, operate in the city. You are given the following data:

- 85% of the cabs in the city are Green and 15% are Blue.

- A witness identified the cab as Blue. The court tested the reliability of the witness under the circumstances that existed on the night of the accident and concluded that the witness correctly identified each one of the two colors 80% of the time and failed 20% of the time.

What is the probability that the cab involved in the accident was Blue rather than Green?

Most of us would answer 80%, but that is incorrect. Let us solve the problem using what we just learned.

Capturing the information in a table:

| Witness says | Green Cab Company (85%) | Blue Cab Company (15%) |
|---|---|---|
| Green | 80%: witness identifies Green as Green = 0.85 * 0.8 = 0.68 | 20%: witness identifies Blue as Green = 0.15 * 0.2 = 0.03 |
| Blue | 20%: witness identifies Green as Blue = 0.85 * 0.2 = 0.17 | 80%: witness identifies Blue as Blue = 0.15 * 0.8 = 0.12 |

- P(A|B) = probability that the cab was actually Blue, given that the witness says Blue

- P(B|A) = probability that the witness correctly identifies a Blue cab as Blue = 0.80

- P(A) = probability that a cab in the city is Blue = 0.15

- P(B) = probability that the witness identifies any cab as Blue = (20% of the Green cabs, identified as Blue) + (80% of the Blue cabs, identified as Blue) = 0.20 * 0.85 + 0.80 * 0.15 = 0.29

P(A|B) = P(B|A) P(A) / P(B)

P(A|B) = 0.80*0.15 / (0.20*0.85 + 0.80*0.15)

P(A|B) = 0.12 / 0.29

P(A|B) = 0.4138

That is, the probability that the cab was actually Blue, given that the witness says Blue, is only about 41% (and about 59% that it was Green). That is not a high enough confidence level to rely on the witness’s claim.
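If the formula still feels like a trick, a quick simulation makes the result tangible. The sketch below is a Monte Carlo check written for illustration (the trial count and random seed are arbitrary choices, not part of the problem). It generates many night-time accidents from the stated 85%/15% and 80%/20% figures, keeps only the cases where the witness says Blue, and measures how often the cab really was Blue.

```python
import random

# Monte Carlo check of the cab problem. Rates come from the problem
# statement; the number of trials and the seed are arbitrary.

P_BLUE = 0.15             # 15% of cabs in the city are Blue
P_WITNESS_CORRECT = 0.80  # witness names the right color 80% of the time


def simulate(trials=200_000, seed=42):
    rng = random.Random(seed)
    said_blue = 0
    said_blue_and_blue = 0
    for _ in range(trials):
        cab_is_blue = rng.random() < P_BLUE
        witness_correct = rng.random() < P_WITNESS_CORRECT
        # A correct witness reports the true color; a wrong one reports the other.
        says_blue = cab_is_blue if witness_correct else not cab_is_blue
        if says_blue:
            said_blue += 1
            if cab_is_blue:
                said_blue_and_blue += 1
    return said_blue_and_blue / said_blue


estimate = simulate()
exact = 0.80 * 0.15 / (0.20 * 0.85 + 0.80 * 0.15)
print(f"simulated: {estimate:.3f}, formula: {exact:.4f}")
```

The simulated share should land close to 0.41; any small gap from the formula is sampling noise and shrinks as `trials` grows.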

The above is a very simplified application of Bayes’ theorem. For me, the key lesson from this theorem is that it provides a framework for refining my beliefs or hypotheses based on additional evidence. In other words, my inductive reasoning goes through more cycles before I confirm a hypothesis or theory. I have tried to visualize this refinement as a decision tree, perhaps a topic for another time. For now, here are some other scenarios where I think this model can be useful:

- Medical tests

- Recruitment, especially when you are making hiring decisions based on the information gathered

- Sample selection for quality control or audits

- Information-based betting and gambling

- The Monty Hall problem can be solved using this model

- As mentioned above, Nate Silver used this model very effectively in making his poll predictions. His book “The Signal and the Noise” has been on my to-read list for a long time.
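As an example of the Monty Hall item above, here is a minimal Python sketch that applies Bayes’ theorem to the standard version of the puzzle. The rules it encodes are the usual textbook assumptions, not something spelled out in this post: the car is equally likely to be behind any of three doors, you pick door 1, and the host, who knows where the car is, always opens an unpicked door hiding a goat, choosing at random when two such doors are available. We then observe him open door 3. Exact fractions are used so the answer is not blurred by rounding.

```python
from fractions import Fraction

# Monty Hall via Bayes' theorem, under the standard assumptions above.

DOORS = (1, 2, 3)
PICKED = 1   # the contestant's first choice
OPENED = 3   # the door the host is observed to open


def p_host_opens(opened, car, picked):
    """P(host opens `opened` | car behind `car`, contestant picked `picked`)."""
    if opened in (car, picked):
        return Fraction(0)  # host never opens the car door or your door
    choices = [d for d in DOORS if d not in (car, picked)]
    return Fraction(1, len(choices))


prior = {door: Fraction(1, 3) for door in DOORS}
likelihood = {door: p_host_opens(OPENED, door, PICKED) for door in DOORS}
evidence = sum(likelihood[d] * prior[d] for d in DOORS)  # P(host opens door 3)
posterior = {d: likelihood[d] * prior[d] / evidence for d in DOORS}

for door, p in posterior.items():
    print(f"P(car behind door {door} | host opened door {OPENED}) = {p}")
```

Under these rules the car is behind your original door with probability 1/3 and behind door 2 with probability 2/3, so switching doubles your chance of winning. Change the host’s behavior (for example, a host who opens doors at random) and the likelihoods, and therefore the answer, change with it.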

Friend, I’ve been studying Bayesian reasoning in depth recently and have been amazed at how many applications this simple theorem has. Are you familiar with the LessWrong community and their writings on Bayes’ theorem? I have already found that this theorem is my most powerful mental model when confronted with new evidence.

I will leave some of my favorite LessWrong Articles here.

http://lesswrong.com/lw/ul/my_bayesian_enlightenment/

http://lesswrong.com/lw/1to/what_is_bayesianism/

http://wiki.lesswrong.com/wiki/Sequences

As always, I enjoy seeing this overlap of ideas between you and me.

Loved the articles; they gave me a new perspective on Bayesian reasoning. I am also reading the autobiography of Albert Einstein, and he is a big fan of deductive reasoning. His approach is to build on what is already known and refine it further based on new evidence. I am able to connect Bayesian reasoning to deductive reasoning in more meaningful ways. Perhaps a topic for some future post :)… Here is a short excerpt from the book relating to his approach:

“The simplest picture one can form about the creation of an empirical science is along the lines of an inductive method. Individual facts are selected and grouped together so that the laws that connect them become apparent…However, the big advances in scientific knowledge originated in this way only to a small degree…The truly great advances in our understanding of nature originated in a way almost diametrically opposed to induction. The intuitive grasp of the essentials of a large complex of facts leads the scientist to the postulation of a hypothetical basic law or laws. From these laws, he derives his conclusions.”

His appreciation for this approach would grow. “The deeper we penetrate and the more extensive our theories become,” he would declare near the end of his life, “the less empirical knowledge is needed to determine those theories.” By the beginning of 1905, Einstein had begun to emphasize deduction rather than induction in his attempt to explain electrodynamics. “By and by, I despaired of the possibility of discovering the true laws by means of constructive efforts based on experimentally known facts,” he later said. “The longer and the more despairingly I tried, the more I came to the conviction that only the discovery of a universal formal principle could lead us to assured results.”

I will be looking forward to the future post. I tried to read Einstein’s autobiography many years ago, but a lot of things went over my head. I will give it a second read, the excerpt you quoted intrigues me.
